Overview

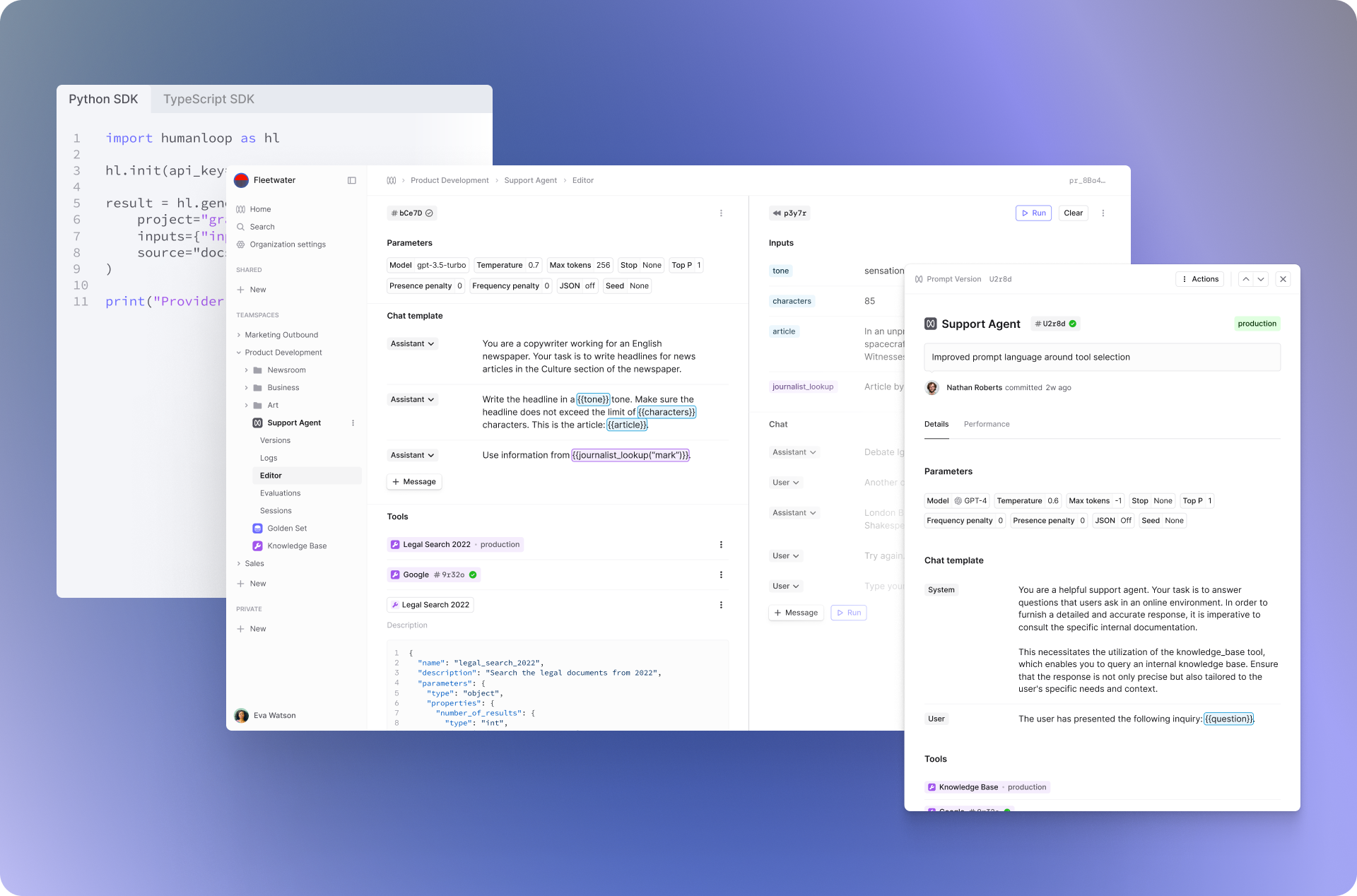

Humanloop is the Integrated Development Environment for Large Language Models

Humanloop enables product teams to develop LLM-based applications that are reliable and scalable.

At its core, Humanloop is an evaluation suite that lets you rigorously measure and improve LLM performance during development and in production, combined with a collaborative workspace where engineers, PMs and subject matter experts improve prompts, tools and agents together.

By adopting Humanloop, teams save 6-8 engineering hours each week through better workflows and gain confidence that their AI is reliable.

Humanloop's IDE for LLMs helps teams prompt engineer and evaluate LLM applications.

The power of Humanloop lies in integrating evaluation, monitoring and prompt engineering in one platform.

Combining evaluation workflows and prompt development in the same place lets you quickly iterate between understanding system performance and taking the actions needed to improve it. Additionally, the SDK slots into your existing code-based orchestration, and the user-friendly interface allows both developers and non-technical stakeholders to adjust the AI together.

You can learn more about the challenges of AI development and how Humanloop solves them in "Why Humanloop?".


Jump straight in by following our Quick Start guide or learn more about the Humanloop platform.